As you might have expected after Wednesday’s post, I have also put a chart together that illustrates the Eagles Offense’s evolution in personnel over the Andy Reid era. Rather than doing a pure positional chart like before (I may still put that together for the offense), I decided to illustrate the Top 5 players from each year (by yards from scrimmage).

This gives us a better idea of the general focus of the offense each season, as well as showing the ebb and flow of each player’s career with the Eagles. Below is the chart. Please note that the only data included is for years in which the subject player ranked in the top 5 of yards from scrimmage for the Eagles. So if a player either doesn’t feature on the chart or has a year for which they are listed at 0 yards, it just means they didn’t rank in the top 5 of the team that year, not that they recorded 0 yards that year. Also, this highlights rushing and receiving yards, so QBs are not represented beyond their rushing contributions. Click to enlarge.

I know that’s not the easiest chart to read, so I labeled the major contributors. In general, as the chart moves from left to right, the legend (right) moves from the bottom to the top. Additionally, here is the data:

Takeaways:

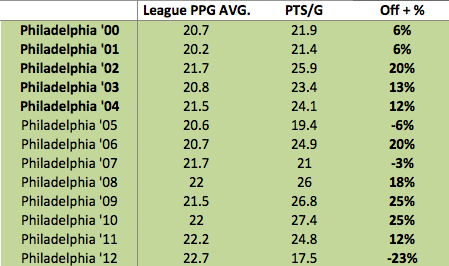

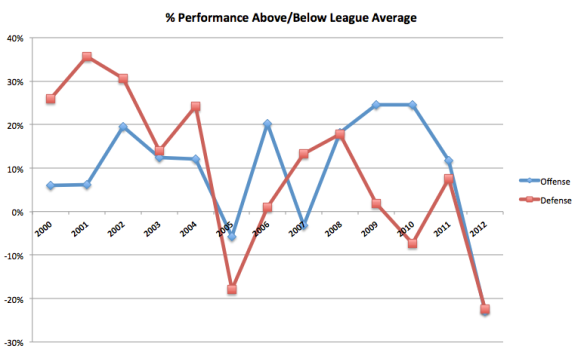

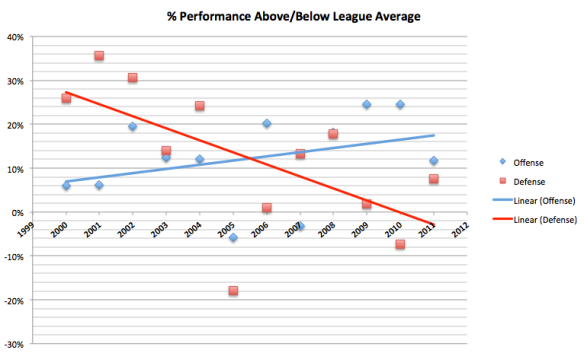

– Overall, we can see the gradual increase in total yards for the top 5 offensive players. As I illustrated last week, the Eagles offense improved over Andy Reid’s tenure, and not just as a result of overall league offensive inflation.

– The increase in overall yards coincides with an increase in the % of Total (seen at the bottom of the data table). This shows how the Eagles offense became more “playmaker” focused. In Andy Reid’s first few years, the distribution of offensive yards and touches was more widespread than in his later years. Obviously, the early years’ offenses did not include anywhere near the same level of skill as the later years’.

– Brian Westbrook jumps out immediately, and clearly held the largest non-QB offensive share during the Andy Reid era. Notably, LeSean McCoy looks like he had the potential to match Westbrook’s contributions if Andy had continued to coach the team. It always bothered me that Andy didn’t feature Shady in the same way he did Westbrook. Part of that is because the Eagles now have DeSean and Maclin, and part is due to the fact that Shady is not as good a receiver as Westbrook was.

However, I would like to see Shady used as a receiver more often, and not just on designed screens or check-downs.

– As I already mentioned, the chart highlights the clear improvement in offensive talent over the course of the Andy Reid era. The main early contributors were James Thrash, Duce Staley, and Todd Pinkston; the late ones Shady, Jackson, and Maclin, with Westbrook in the middle.

Note, though, that the Eagles’ best years under Reid featured Duce Staley as the biggest offensive weapon (outside of McNabb).

– The chart also illustrates the relative cameos made by random players like Dorsey Levens, Donte Stallworth, Antonio Freeman, Hank Baskett, and Greg Lewis. Remember them? They each had at least one season in which they were a “top 5” offensive weapon for the Eagles.

– 2008 stands out as a major transition year for the offense; before then Westbrook was THE weapon, while the years after marked the fast transition to the current Eagles offensive era.

– At the moment, I expect the Chip Kelly era to bring more equality to the offensive yards distribution. That’s obviously speculative, but I believe we will see the “Top 5% of total” decline over the next year or two.
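For anyone who wants to rebuild the “top 5” and “% of Total” figures behind the chart, here is a rough sketch of how I would approach it in Python. The player names and yardage below are made-up placeholders, not real Eagles stats, and the function names (`top_n_by_season`, `chart_series`) are just labels I picked for illustration. It assumes one row per player per season with rushing and receiving yards, and it treats “% of Total” as the top 5’s combined yards divided by the summed yards from scrimmage of every player listed for that season.

```python
from collections import defaultdict

# Hypothetical rows: (season, player, rushing yards, receiving yards).
# Placeholder numbers for illustration only, NOT real Eagles stats.
ROWS = [
    (2001, "Player A", 500, 500),
    (2001, "Player B", 0, 800),
    (2001, "Player C", 600, 0),
    (2001, "Player D", 0, 400),
    (2001, "Player E", 100, 100),
    (2001, "Player F", 0, 100),
    (2002, "Player A", 700, 500),
    (2002, "Player G", 0, 900),
    (2002, "Player D", 0, 500),
    (2002, "Player E", 0, 400),
    (2002, "Player F", 0, 350),
    (2002, "Player B", 0, 300),
]


def top_n_by_season(rows, n=5):
    """Return {season: (top_n, share)}.

    top_n is a list of (player, yards from scrimmage), largest first;
    share is the top n's combined yards divided by the season total.
    """
    by_season = defaultdict(dict)
    for season, player, rush, rec in rows:
        totals = by_season[season]
        totals[player] = totals.get(player, 0) + rush + rec

    result = {}
    for season, totals in by_season.items():
        ranked = sorted(totals.items(), key=lambda kv: kv[1], reverse=True)
        top = ranked[:n]
        team_total = sum(totals.values())
        share = sum(y for _, y in top) / team_total if team_total else 0.0
        result[season] = (top, share)
    return result


def chart_series(result):
    """Build {player: {season: yards}} for charting.

    A player gets 0 for any season in which he was outside the top n,
    which matches how the chart treats non-top-5 years.
    """
    seasons = sorted(result)
    players = {p for top, _ in result.values() for p, _ in top}
    return {
        p: {s: dict(result[s][0]).get(p, 0) for s in seasons}
        for p in players
    }


if __name__ == "__main__":
    summary = top_n_by_season(ROWS)
    for season in sorted(summary):
        top, share = summary[season]
        print(season, [name for name, _ in top], f"{share:.1%}")
```

The zero-fill in `chart_series` is the reason a player can show 0 yards on the chart in a year where he actually played: he just fell outside that season’s top 5.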

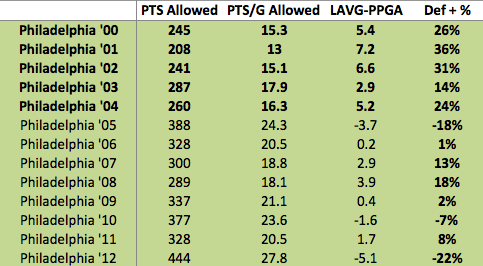

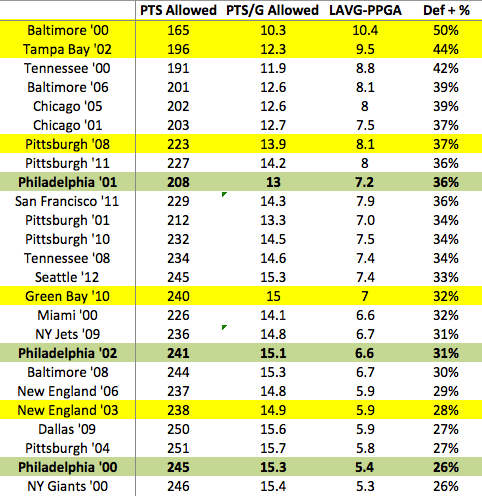

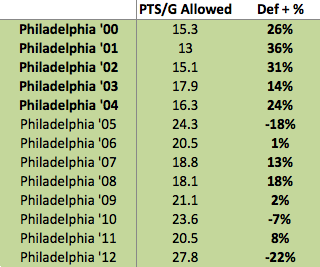

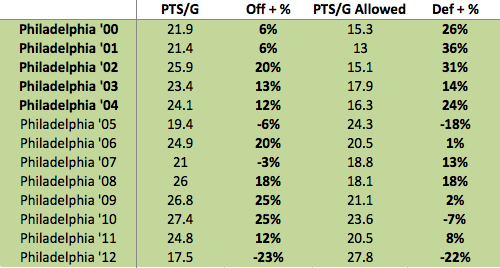

Given what we know about the correlation between Point Differential and Wins, we should expect to see something resembling an inverse relationship here (i.e. PA goes up = Wins go down). We do see some of that, but from ’06-’09 that relationship doesn’t hold. The biggest takeaway here is just how bad (and anomalous) last year’s performance was. From 2003 to 2011, the average Points Against for the Eagles was just 20.11 per game, with a high of 24.3 in 2005. Last year the team allowed 27.8 points per game, roughly 7.7 points (more than a full touchdown) per game over the long-term average.
