Today, with the help of a collaborator, I’ll give you prospect rankings for the NFL Draft that you won’t find anywhere else. As I’ve explained before, I am not a scout and have not watched film on every top prospect in this year’s draft. However, I believe what I’ll show you today is more useful than any individual scout’s ranking.

First things first, big thanks to George Laevsky (JD from Georgetown) for the help. He came up with the idea and name for the Positional Value Multiplier and worked with me on compiling/computing the necessary data.

To keep this clean, I’ll explain it in 3 sections. First I’ll tell you what we did, then I’ll tell you how we did it, then I’ll show you the results. That way, if you want to skip the middle section you can.

What We Did:

The overall aim of this project was to apply a positional value modifier to the consensus prospect rankings, in the hope of generating a more accurate system of ranking value. We compiled a composite prospect rating for each player (through the first couple of rounds) and then adjusted for positional importance according to last season’s league-wide positional salary distribution.

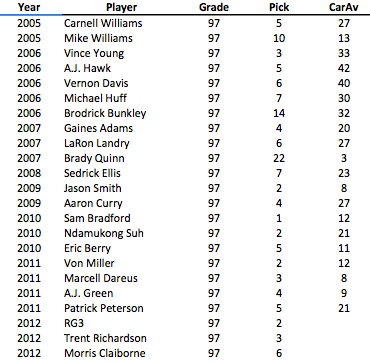

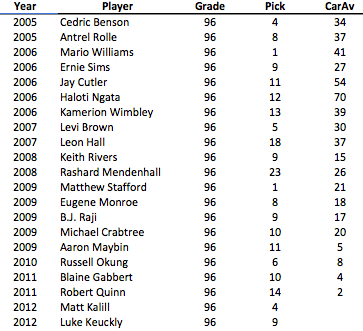

Before we go into the How details, here is the consensus prospect ranking using ratings from Scouts Inc (ESPN), the National Football Post, and NFL.com. Note: NFP uses a different grading scale, so those scores were adjusted to give us an apples-to-apples rating.

That graphic alone is pretty interesting, particularly when the ratings diverge (see Ryan Nassib at the bottom), but we’ll look at that some other time.
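
For anyone curious what the apples-to-apples adjustment mentioned above might look like in code, here is a minimal Python sketch. It assumes a simple linear rescale of the NFP grade onto a 0-100 scale followed by a straight average of the three grades. The function names, the NFP scale bounds, and every grade below are placeholders for illustration, not the numbers or the exact method we used.

```python
def rescale(grade, src_min, src_max, dst_min=0.0, dst_max=100.0):
    """Linearly map a grade from one scale onto another (assumed method)."""
    fraction = (grade - src_min) / (src_max - src_min)
    return dst_min + fraction * (dst_max - dst_min)


def composite_rating(scouts_inc, nfl_com, nfp, nfp_min, nfp_max):
    """Average three grades after putting the NFP grade on a 0-100 scale."""
    nfp_adjusted = rescale(nfp, nfp_min, nfp_max)
    return (scouts_inc + nfl_com + nfp_adjusted) / 3


# Hypothetical prospect: two grades already on a 0-100 scale, plus an NFP
# grade on a made-up 5.0-8.0 scale.
example = composite_rating(
    scouts_inc=90.0, nfl_com=90.0, nfp=7.5, nfp_min=5.0, nfp_max=8.0
)
print(round(example, 1))
```

A straight average treats all three sources as equally reliable. Weighting them differently would be an easy change, but that is a separate judgment call.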

For today’s post, we have to adjust.

How We Did It:

I mentioned last week that no BPA ranking is complete without an adjustment for relative positional value. For example (an extreme one), if a QB and K both carry a 95 rating, you’d obviously choose the QB first. The question is, how do we measure relative importance by position?

While there is no bullet-proof method of doing so, the salary distribution in the NFL is as good a place as any to divine information from. In theory, since the NFL has a salary cap, the distribution of limited funds between positions will give us an idea of how the league, on average, values different positions in relation to each other.

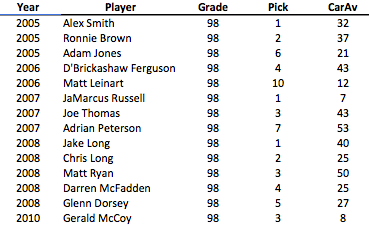

We pulled salary cap information from this awesome graphic featured in the Guardian at the end of January. It’s not perfect (reflects cap hits from last season and misses some IR guys), but in general I believe it’s as good a breakdown as any for our purposes today. After adjusting for the number of players by position, we calculated a Positional Value Multiplier (“PVM”) for each major position (FB, K, P not included). We then applied that multiplier to the above consensus rankings.
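
To make the idea concrete, here is one way a multiplier like this could be computed in Python. This is a sketch under assumptions, not our exact formula: it takes the total cap dollars spent at each position, divides by the number of players at that position, and scales the results so the average multiplier is 1.0. The function name, the cap totals, and the player counts are all invented for illustration and are not the Guardian figures.

```python
def positional_value_multipliers(cap_totals, player_counts):
    """Per-player cap spend by position, scaled so the mean multiplier is 1.0.

    This normalization is an assumption made for the sketch.
    """
    per_player = {pos: cap_totals[pos] / player_counts[pos] for pos in cap_totals}
    mean_spend = sum(per_player.values()) / len(per_player)
    return {pos: spend / mean_spend for pos, spend in per_player.items()}


# Hypothetical league-wide cap totals (in $ millions) and player counts.
cap_by_position = {"QB": 300.0, "WR": 400.0, "G": 150.0}
players_by_position = {"QB": 100, "WR": 200, "G": 100}

pvm = positional_value_multipliers(cap_by_position, players_by_position)
for pos, value in sorted(pvm.items(), key=lambda item: item[1], reverse=True):
    print(pos, round(value, 2))
```

Note that WR has the largest total spend in this made-up data but a smaller multiplier than QB, because the money is spread across more players. That is the whole point of adjusting for the number of players by position.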

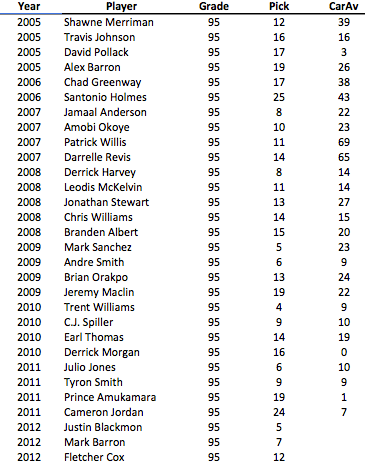

Here are the multiplier values we arrived at, in order from largest to smallest:

For the most part these make a lot of sense, based on what’s “common knowledge”. QBs are, by far, the most important position. However, the relative rankings of WRs and RBs certainly surprised me. Given the noise in the data, though, it’s best not to get hung up on the minute differences in the values above. Instead, we can see there are some clear “tiers” (I feel like I am using that term a lot).

Tier 1 – QBs

Tier 2 – WR, CB, DE, RB, DT

Tier 3 – OT, LB, TE, S

Tier 4 – C, G

The only thing in those rankings that immediately draws my attention is the OT position in the 3rd tier. But the data is what it is; we can debate the reasons later.

Now that we have the PVM values, we can apply them to the prospect rankings.
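
In code, that step could look something like the Python sketch below. It assumes the adjustment is a straight multiplication of each consensus rating by its position’s PVM, followed by a re-sort. The function name, prospect names, ratings, and multiplier values are all hypothetical placeholders, not our actual figures.

```python
def rerank(prospects, pvm):
    """Re-rank prospects by consensus rating times positional multiplier.

    prospects: list of (name, position, consensus_rating) tuples.
    Returns one dict per prospect, ordered by adjusted rating, with the
    pre-adjustment rank and the number of spots gained (+) or lost (-).
    """
    by_consensus = sorted(prospects, key=lambda p: p[2], reverse=True)
    consensus_rank = {p[0]: i + 1 for i, p in enumerate(by_consensus)}

    adjusted = [(name, pos, rating * pvm[pos]) for name, pos, rating in prospects]
    adjusted.sort(key=lambda row: row[2], reverse=True)

    table = []
    for i, (name, pos, adj_rating) in enumerate(adjusted):
        new_rank = i + 1
        table.append({
            "name": name,
            "pos": pos,
            "adjusted": adj_rating,
            "rank": new_rank,
            "consensus_rank": consensus_rank[name],
            "change": consensus_rank[name] - new_rank,
        })
    return table


# Hypothetical inputs for illustration only.
sample_pvm = {"QB": 1.10, "S": 1.00, "G": 0.95}
sample_prospects = [
    ("Prospect A", "S", 94.0),
    ("Prospect B", "QB", 90.0),
    ("Prospect C", "G", 92.0),
]

for row in rerank(sample_prospects, sample_pvm):
    print(row["rank"], row["name"], row["pos"], row["change"])
```

In this toy example the QB starts last of the three on raw rating and finishes first after the adjustment, which is the same kind of movement you’ll see in the real results.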

The Results:

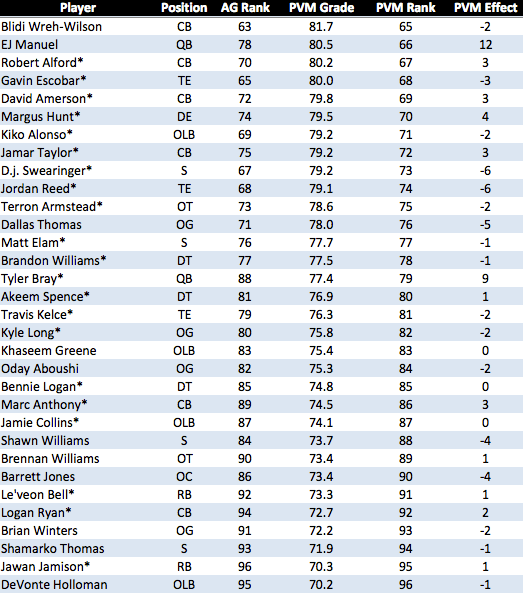

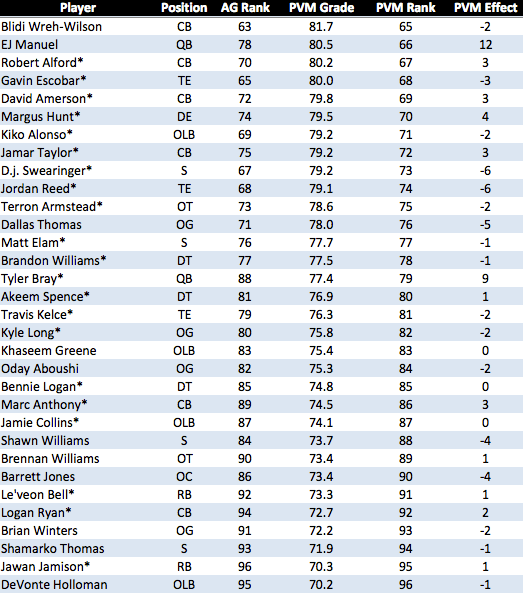

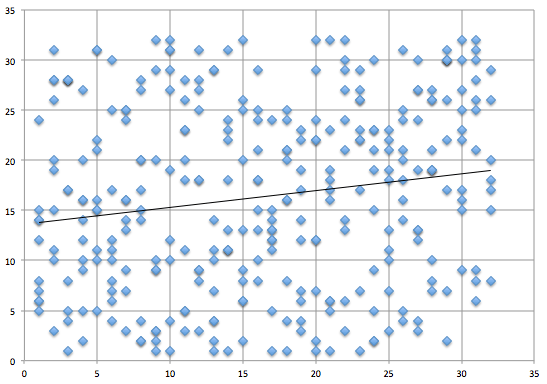

Some very interesting movement. The right-most column shows the effects of the positional modifier. The AG Rank column is the pre-adjustment consensus ranking.

Notes:

– Dee Milliner jumps two places to become the top overall prospect.

– Chance Warmack, though he drops 3 spots, remains a top 5 prospect, damaging my belief that a G in the top 15 picks is a very poor decision.

– QBs, as expected, benefit the most. Geno Smith jumps 11 spots to become a top ten prospect, while Nassib and Barkley move into the middle of the first round.

– The biggest jump overall comes from Tyler Wilson (20 spots), who moves from the middle of the second round to the end of the first.

– Zach Ertz (TE) and Jonathan Cyprien (S) are hurt the most, falling out of the first round, and therefore off the chart above.

One last thing: I want to be perfectly clear about the value of this analysis. The idea here is that BPA is an overly simplistic and flawed method of drafting by its current definition. For example, while Geno Smith (19th consensus) may be a worse prospect than Kenny Vaccaro (9th consensus), with lower odds of success, the potential payoff is so much greater for Smith that he becomes a better choice (at least as shown here). Hitting on a QB offers a MUCH greater reward than hitting on a S (or really any other position), so it makes complete sense that QBs are perennially “over-drafted”.

In essence, what we are showing here is that they are not, in fact, “over-drafted”. Yes, they might have greater odds of failure, but that does not make them bad picks. Remember, you have to look at both Risk AND Reward, balancing the two. The above rankings are an effort to do that with a method as simple and transparent as possible.
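
If it helps, here is the risk/reward logic as a tiny Python example. The hit probabilities and payoffs are made up purely for illustration, and the function name is my own. The PVM approach does not estimate either quantity directly; it just uses salary as a rough stand-in for payoff.

```python
def expected_value(p_hit, payoff_if_hit):
    """Expected payoff of a pick: chance of hitting times the value of a hit."""
    return p_hit * payoff_if_hit


# Hypothetical numbers: the QB is less likely to pan out, but a hit is worth
# twice as much as a hit at safety.
qb_pick = expected_value(p_hit=0.40, payoff_if_hit=10.0)
s_pick = expected_value(p_hit=0.60, payoff_if_hit=5.0)

print("QB is the better bet" if qb_pick > s_pick else "S is the better bet")
```

With these numbers, the riskier QB still comes out ahead because the size of the payoff more than makes up for the lower odds.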

Over the past few months, I’ve tried to advance the idea that the “consensus” forecast should carry a large degree of inertia within NFL front offices. Imagine the above rankings as equivalent to a total market stock index. Anyone going against the total market index must believe VERY STRONGLY that they have better information or better analysis than the rest of the market. It should function in much the same way in the NFL (and all professional sports leagues). The idea is NOT that teams should blindly follow the “market”, just that they should hold their own evaluations up to very high scrutiny before acting on them, especially when they largely conflict with available data.

I’ll be examining this in a lot more detail, which may or may not lead to more posts on the subject. In any case, this should give everyone something to think about come draft day.

For what it’s worth, my subjective pick for the Eagles would still be Lotulelei/Joeckel. However, unless I adjust the PVM formula (or the consensus ratings change), it looks like Dee Milliner is now, objectively, in the lead.

UPDATED: Also, below is the same analysis for the rest of the players we looked at: